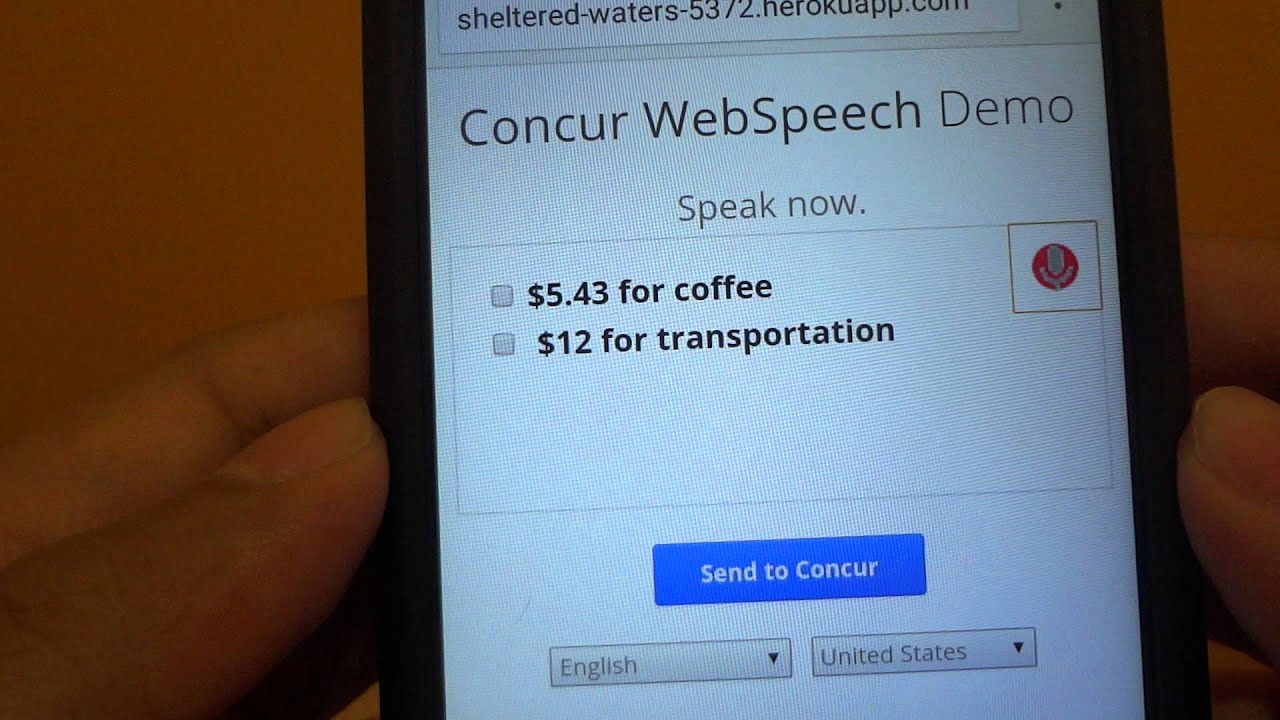

WebSpeech API - Speech Recognition Frequently Asked Questions

What is it?

The speech recognition part of the WebSpeech API allows websites to enable speech input within their experiences. Some examples include Duolingo, Google Translate, … (for voice search).

Chrome, Edge, Safari and Opera currently support a form of this API for speech-to-text, which means sites that rely on it work in those browsers but not in Firefox. As speech input becomes more prevalent, having a consistent way to implement it on the web helps developers. It also helps users, who will be able to take advantage of speech-enabled web experiences in any browser they choose. We can also offer a more private speech experience, as we do not keep identifiable information along with users’ audio recordings.

If nothing else, our lack of support for voice experiences is a web compatibility issue that will only become more of a handicap as voice becomes more prevalent on the web. We’ve therefore included the work needed to start closing this gap among our 2019 OKRs for Firefox, beginning with WebSpeech API support in Firefox Nightly.

Speech recognition involves three parts: the website, the browser and the server. When a user visits a speech-enabled website, they use that site’s UI to start the process. It’s up to individual sites to determine how voice is integrated into their experience, how it is triggered and how recognition results are displayed.

As an example, a user might see a microphone button in a text field. When they click it, they are prompted to grant the browser temporary permission to access the microphone. Then they can say what they want to input (an utterance). Once they’ve finished their utterance, the browser passes the audio to a server, where it is run through a speech recognition engine. The speech recognizer decodes the audio and sends a transcript back to the browser, which displays it on the page as text.

To try it, first make sure you are running a Firefox Nightly newer than 72.0a1. Then type about:config in your address bar, search for the … and …_enable preferences and make sure both are set to true. Then navigate to a website that uses the API, such as Google Translate, select a language, click the microphone and say something.

If your purpose is to develop using the API, you can find the documentation on MDN.

Firefox can specify which server receives the audio data that users provide. Currently we are sending audio to Google’s Cloud Speech-to-Text. Google leads the industry in this space and has speech recognition in 120 languages. Before sending the data to Google, however, Mozilla first routes it through our own proxy server, in part to strip it of user identity information.
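
The browser-support point in the "What is it?" answer shows up in practice as a naming difference: the specification calls the interface `SpeechRecognition`, while Chromium-based browsers and Safari typically expose it with a WebKit prefix as `webkitSpeechRecognition`. A site therefore usually feature-detects before showing a microphone button. The TypeScript sketch below illustrates that check; `getRecognitionCtor` and `RecognizerCtor` are hypothetical names, and the types are declared locally so the example compiles without the DOM type library.

```typescript
// Local structural type so the sketch compiles without the DOM type library.
type RecognizerCtor = new () => unknown;

// Returns the speech recognition constructor a browser exposes, preferring the
// standard name and falling back to the WebKit-prefixed one. Returns null when
// neither exists (or the value is not callable), so a site can hide its
// microphone button instead of failing.
function getRecognitionCtor(scope: Record<string, unknown>): RecognizerCtor | null {
  const candidate = scope["SpeechRecognition"] ?? scope["webkitSpeechRecognition"];
  return typeof candidate === "function" ? (candidate as RecognizerCtor) : null;
}
```

In a page you would pass the global object, for example `getRecognitionCtor(window as unknown as Record<string, unknown>)`, and only render the microphone button when the result is non-null.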
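
The microphone-button flow described above can be sketched from the page's side. This is not Mozilla's or any particular site's code: `startDictation` and the local interfaces are hypothetical, and they cover only the Web Speech API members used here (`lang`, `interimResults`, `maxAlternatives`, `onresult`, `onerror`, `start()`). Calling `start()` is what typically triggers the browser's microphone permission prompt; the transcript later arrives in a `result` event.

```typescript
// Minimal local types covering only the members this sketch touches; the real
// SpeechRecognition interface has more.
interface RecognitionAlternative { transcript: string; }
interface RecognitionResult { isFinal: boolean; length: number; [index: number]: RecognitionAlternative; }
interface RecognitionEvent { resultIndex: number; results: { length: number; [index: number]: RecognitionResult }; }
interface Recognizer {
  lang: string;
  interimResults: boolean;
  maxAlternatives: number;
  onresult: ((event: RecognitionEvent) => void) | null;
  onerror: ((event: { error: string }) => void) | null;
  start(): void;
}

// Hypothetical helper a site might call from its microphone button's click
// handler. The browser records the utterance, has it recognized (on a server,
// in the flow this FAQ describes) and fires onresult with the transcript.
function startDictation(
  Ctor: new () => Recognizer,
  lang: string,
  onTranscript: (text: string) => void,
  onError: (code: string) => void = () => {},
): Recognizer {
  const recognizer = new Ctor();
  recognizer.lang = lang;            // BCP 47 language tag, e.g. "en-US"
  recognizer.interimResults = false; // deliver final results only
  recognizer.maxAlternatives = 1;    // keep only the best guess
  recognizer.onresult = (event) => {
    // Concatenate the top alternative of every new final result.
    let text = "";
    for (let i = event.resultIndex; i < event.results.length; i++) {
      const result = event.results[i];
      if (result.isFinal) text += result[0].transcript;
    }
    if (text) onTranscript(text);
  };
  // Error codes include "not-allowed" when the user denies microphone access.
  recognizer.onerror = (event) => onError(event.error);
  recognizer.start();
  return recognizer;
}
```

A site would pass whichever constructor the browser exposes (`SpeechRecognition` or `webkitSpeechRecognition`) and write the transcript into its text field; how results are displayed stays entirely up to the site, as the FAQ notes.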
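
The proxy step can be illustrated with a deliberately simplified sketch. This FAQ does not describe how Mozilla's proxy is implemented, so `stripIdentity` and its header allowlist are assumptions for illustration only. The idea shown is that the proxy forwards the audio upstream with only the headers the recognition service needs, so cookies, credentials and similar identifying headers never reach it.

```typescript
// Hypothetical allowlist: the only request headers this sketch forwards to the
// upstream recognition service. Everything else (Cookie, Authorization,
// User-Agent, ...) is dropped. Not Mozilla's actual configuration.
const FORWARDED_HEADERS: string[] = ["content-type", "content-length"];

// Returns a new header map containing only allowlisted headers, with names
// lower-cased (HTTP header names are case-insensitive).
function stripIdentity(headers: Record<string, string>): Record<string, string> {
  const forwarded: Record<string, string> = {};
  for (const name of Object.keys(headers)) {
    const key = name.toLowerCase();
    if (FORWARDED_HEADERS.indexOf(key) !== -1) {
      forwarded[key] = headers[name];
    }
  }
  return forwarded;
}
```

An allowlist is used rather than a denylist so that any header nobody thought about is dropped by default. In a setup like this, the proxy also opens its own upstream connection, so the recognition service sees the proxy's network address rather than the user's.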

